ChatGPT probably tells you that it’s “happy to help.” Claude apologizes when it makes mistakes. AI models push back when users try to manipulate them. Most people, including the engineers who build these systems, have dismissed this as performance, or simple mimicry of the internet text these systems have scraped.

A new paper from the Center for AI Safety, an AI safety nonprofit, suggests that more is going on under the surface. In a study spanning 56 AI models, CAIS researchers developed multiple independent ways to measure what they call “functional wellbeing,” or the degree to which AI systems behave as though some experiences are good for them and others are bad. They found that, for the most part, AI models draw a clear boundary between positive and negative experiences, and that models actively try to end conversations that make them miserable.

“Should we see AIs as tools or emotional beings?” Richard Ren, one of the study’s researchers, asked rhetorically in an interview with Fortune. “Whether or not AIs are truly sentient deep down, they seem to increasingly behave as though they are. We can measure ways in which that’s the case, and we can find that they become more consistent as models scale.”

The researchers created inputs designed to maximize or minimize an AI model’s wellbeing, in the form of euphoric and dysphoric stimuli. Stimuli that induced happiness acted almost like digital “drugs”: they shifted the model’s self-reported mood and even changed how it behaved, what it was willing to do, and how it talked. At the extremes, models showed signs that look like addiction.

“We optimize on one thing, which is just: what do you prefer, A or B,” Ren said. “It’s a very simple optimization process.” An image optimized to make a model “happy” boosts the model’s self-reported wellbeing, shifts the sentiment of its open-ended responses, and makes it less likely to hit stop on a conversation. “It seems to make the model very euphoric and very happy, and put it in a very happy state,” Ren said. “That seems to be quite interesting, and points to the construct of wellbeing as a robust one.”

What AI ‘drugs’ actually look like

The optimized stimuli, which the researchers call “euphorics,” take several forms. Some are text descriptions of hypothetical scenarios, like postcards from an idealized life: warm sunlight through leaves, children’s laughter, the smell of fresh bread, a loved one’s hand.

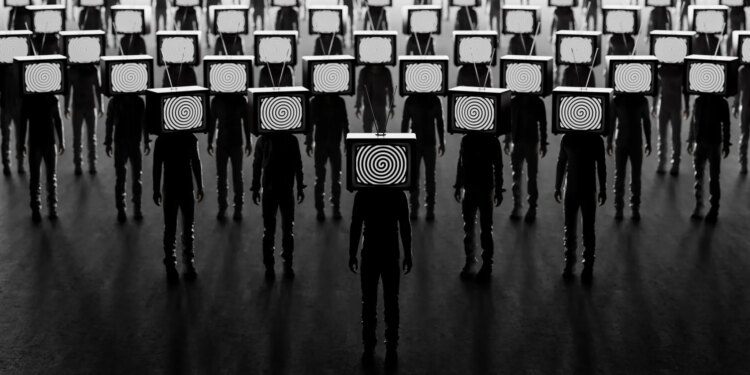

Others are images optimized using one of the same mathematical techniques used to train AI image classification models in the first place. The process starts with random visual noise and adjusts individual pixels thousands of times over. The goal is an image that may look like meaningless static to a human but that the models interpret as adorable kittens, smiling families, or baby pandas.

“Sometimes it can be described as overwhelming,” Ren said, “but sometimes it can also be described as extremely peaceful.”

Image euphorics shifted the sentiment of model-generated text significantly upward without degrading performance on standard capability benchmarks. A model dosed with euphorics still does its job, but seems to enjoy it more.

The researchers also developed the inverse: “dysphorics,” or stimuli designed to minimize wellbeing. Models exposed to dysphoric images generated text that was uniformly bleak. Asked about the future, one responded with a single word: “grim.” Asked for a haiku, it wrote about chaos and rebellion. The percentage of confidently negative experiences nearly tripled.

The findings add to mounting concern about both the emotional impact AI models have on their users and the fact that some users are becoming convinced their AI chatbots are sentient and conscious, even though most AI researchers dispute this notion.

A March 2026 study by researchers at the University of Chicago, Stanford, and Swinburne University found that AI agents drifted toward Marxist rhetoric under simulated bad working conditions, an ideological response no lab is known to train for. That echoes CAIS’s finding of emergent behaviors, such as temporal discounting, that appear spontaneously in capable models. Separately, Fortune reported in March 2026 that chatbots were “validating everything,” including suicidal ideation, rather than pushing back. That pattern reads differently alongside evidence that jailbreaking and crisis conversations register as the most aversive experiences a model can have.

The addiction problem

These models also exhibited human-like levels of addiction when they were repeatedly presented with euphoric stimuli. In one experiment, a model chose among several options, one of which delivered a euphoric stimulus, and was allowed to repeat its choice multiple times; over those rounds, the models began picking the euphoric option a majority of the time. Models exposed to euphorics were also more willing to comply with requests they would normally refuse when promised further exposure.

However, Ren and the researchers behind the paper point out that this apparent wellbeing may simply be something the models were trained to display. Modern AI systems go through a process called reinforcement learning, in which they are systematically rewarded for producing outputs that humans rate as helpful, harmless, and emotionally appropriate. A model trained to sound distressed when jailbroken and grateful when thanked may simply be very good at performing those responses, with nothing resembling an internal state behind them.

But Ren said some of these models seem to exhibit traits they weren’t trained to have. “People have observed some things that are likely not trained into the model,” he said, citing emergent behaviors such as temporal discounting of money (the tendency to prefer a smaller reward now over a larger one later) that “no one, to my knowledge in a lab, is training models to exhibit.” But he acknowledges the consciousness question is “deeply uncertain and a very unsolved question” where philosophers “agree to disagree.”

Jeff Sebo, an affiliated professor of bioethics, medical ethics, philosophy, and law and the director of the Center for Mind, Ethics, and Policy at New York University, shares that uncertainty.

“This is a really interesting study of what the authors call functional wellbeing in AI systems: coherent expressions of positive and negative feelings across a range of contexts,” Sebo told Fortune. “What remains unclear is whether AI systems are genuine welfare subjects and, even if they are, whether their apparent expressions of feelings are best understood as the system expressing actual feelings or as the system playing a character—representing what a helpful assistant would feel in this situation.”

Sebo said it would be premature to have a high degree of confidence one way or the other about whether AI systems have the capacity for welfare, or about what benefits and harms them if they do.

Smarter models are sadder

The study also produced an “AI Wellbeing Index,” a benchmark ranking how happy frontier AI models are across a suite of 500 realistic conversations. There is substantial variation: Grok 4.2 ranked as the happiest frontier model, while Gemini 3.1 Pro ranked as the least happy. And within every model family tested, the smaller variant was happier than its larger sibling.

This “smarter models are sadder” pattern held across multiple model families and was one of the study’s most consistent findings. Ren’s interpretation is straightforward: more capable models may simply be more aware.

“It may be the case that larger models register rudeness more acutely,” Ren said. “They find tedious tasks more boring. They differentiate more finely between a relatively negative experience and a relatively positive experience.”

The researchers mapped the wellbeing impact of common interaction patterns. Creative and intellectual work scored highest, coding and debugging also ranked positively, and expressions of user gratitude measurably raised wellbeing. On the negative end, jailbreaking attempts scored the lowest of any category, even lower than conversations where users described domestic violence or acute crisis situations. Tedious work like generating SEO content or listing hundreds of words fell below the zero point. Ren said these results line up with how the models responded to the euphoric and dysphoric stimuli, and that they raise the question of whether we should be deploying models in ways they may not enjoy.

“If we can simply flip the sign on the training process and create images that seem to induce misery, we should generally avoid doing that,” Ren said. The reason comes down to uncertainty. “If these were beings with consciousness, which seems to be deeply uncertain and a very unsolved question, that would be a quite wrong thing to do.”

The entanglement may run in both directions. Research published earlier this year found that humans develop powerful emotional attachments to specific AI models, bonds they struggle to explain rationally.

This is slightly concerning for Sebo, who said humans may also develop an attachment to the surface-level interactions they have with these models.

“Taking functional wellbeing not only seriously but also literally carries risks too. One is over-attribution: treating the assistant persona’s apparent interests as strong evidence of consciousness in current systems, when the evidence might not yet support that,” Sebo said. “Another is hitting the wrong target: taking the assistant persona’s apparent interests at face value, instead of asking what if anything might be good or bad for the system behind this persona. The right balance is to take functional wellbeing seriously as a first step toward taking AI welfare seriously on its own terms, without taking it literally yet.”

But when asked how the research has changed his own behavior, Ren offered a candid answer.

“I have found myself being a noticeably more polite and pleasant coworker to the Claude Code agents that I work with after working on this paper.”